In the second and final part of his article MIKE SCOTT posits that if we don’t control AI while we’ve got the chance, we could be signing the death warrant for our children and grandchildren

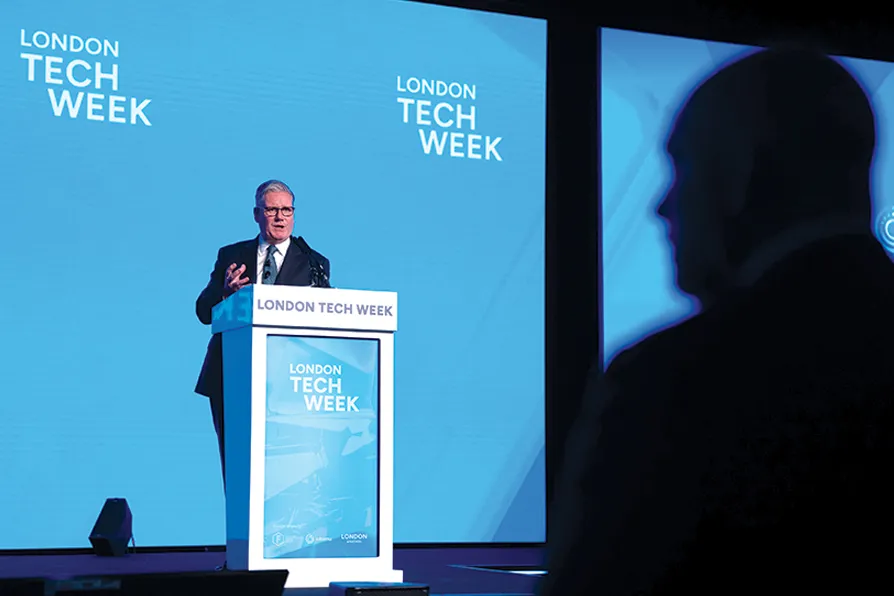

BRAVE NEW WORLD? Keir Starmer at the London Tech Week, in June 2025, where he announced the TechFirst programme for secondary school pupils to be taught skills in artificial intelligence (AI)

THE threat to jobs is already with us, but a range of other concerns about AI have also come to light over the past three years of breakneck development, some of which have been given more publicity than others.

The mainstream media has been full of stories of teachers complaining about students getting AI to write their essays, and authors have been up in arms about their work being used to train AI systems.

Books have appeared by non-existent authors and music by non-existent musicians. Social media campaigns by right-wing conspiracy theorists have been generated and reinforced by multiple non-human posts.

Depressed young people have been actively encouraged by AI chatbots to kill themselves. AI has been used to select Israeli army targets in Gaza and elsewhere. And there’ll be lots more of this to come as AI continues to develop.

One area that has received relatively little attention is the environmental impact of AI.

The data centres needed to power the various activities that politicians and companies are planning will need enormous amounts of electricity. Starmer has spoken of a swath of data centres across the country, but hasn’t explained where the electricity is going to come from.

If all of these are built, they will need as much electricity as is currently used by the entire country.

The years of underfunding since Thatcher’s privatisation of the utilities have left the national grid in a poor condition. It can’t even accept the additional power generated by domestic solar panels or private windmills.

And the move towards all-electric cars will mean a vast increase in chargers without the power to feed them, while the massive amounts of water needed to cool the data centres will be drawn from diminishing reserves and half-empty reservoirs. No prizes for guessing who will be at the back of the queue for what water is available.

But, with all of this to worry about, the biggest concern of all is where AI is going to end up. What is it going to be capable of further down the line?

At the moment, it is simply software code written by humans to do specific tasks. If it doesn’t work properly – and it often doesn’t – it can be switched off and amended. But ahead lies the techie goal of “superintelligence”, which was science fiction three years ago but is now firmly on the horizon.

Superintelligence will mean that AI has developed to such an extent that it can make its own decisions and write its own code. Just stop and think about that for a moment. It means that it will be able to develop itself without human intervention and nobody – but nobody – has the slightest idea what criteria it will use to do that. It will be in control and completely beyond human intervention. No-one will be able to turn it off.

More than 80 years ago, the science fiction author Isaac Asimov wrote a story about robots and how they would need to be controlled. He invented the Three Laws of Robotics, which were:

• A robot may not injure a human being or, through inaction, allow a human being to come to harm.

• A robot must obey orders given to it by human beings, except where such orders would conflict with the First Law.

• A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

These laws were designed to ensure that robots couldn’t decide to turn on humanity. But there are no equivalent laws protecting humanity from AI.

Here are some more quotes to think about:

(Dario Amodei – CEO of Anthropic) “Humanity is about to be handed almost unimaginable power and it is deeply unclear whether our social, political and technological systems possess the maturity to wield it. The world is considerably closer to real danger (from AI) in 2026 than it was in 2023…it will test who we are as a species.”

(Sam Altman – CEO of OpenAI) “No-one knows what happens next…there’s clearly real risks…all we know right now is that we have discovered something extraordinary that is going to reshape the course of our history.”

(Anonymous former OpenAI employee) “There are people who work at the frontier AI companies who earnestly believe there is a chance their company will contribute to the end of the world.”

(Anonymous computer programmer and AI expert) “An entity which is superhuman in its general intelligence, unless it wants exactly what we want, represents a terrible risk to us. If there weren’t the commercial incentives to rush to market and the billions of dollars at stake, then maybe in 15 years we could develop something that we could be confident was controllable and safe. But it’s going much too fast for that.”

(Yoshua Bengio — AI pioneer) “Frontier AI models already show signs of self-preservation…as their capabilities and degree of agency grow, we need to make sure we can shut them down if needed.”

What all this means in practice is that AI should and must be treated as even more dangerous than nuclear weapons.

There needs to be an international campaign to regulate it and make sure it does what is good for humanity, not for the “let’s see how far we can go” techies. Some of these techies are already seriously suggesting that superintelligent AI should be treated as if it were human and protected from any intervention that might harm it.

We don’t have to accept all new inventions as good and we don’t have to let tech entrepreneurs do what they like.

In the last century, there were quite a few things that should never have got off the drawing board: Zyklon B poison gas, nuclear weapons and napalm, for a start.

In the 21st century, we are already on the edge of disaster and this could push us over.

If we don’t control AI while we’ve got the chance, we could be signing the death warrant for our children and grandchildren.

Keir Starmer may want to “mainline AI into the veins of the economy” but do you think we should really be taking the risk?

It’s time for the left and the unions to take the dangers of AI seriously and begin campaigning now for effective regulation. If we don’t, there’s no sign anyone else will, and it won’t be long before it’s too late.

Mike Scott is a retired trade union organiser and chair of Nottingham Socialist Club. Read part 1 of his article here.
